Multimedia

The HDX technology stack supports the delivery of multimedia applications through two complementary approaches:

- Server-side rendering multimedia delivery

- Client-side rendering multimedia redirection

This strategy lets you deliver a full range of multimedia formats with a good user experience, while maximizing server scalability to reduce the cost per user.

With server-rendered multimedia delivery, the application decodes and renders audio and video content on the XenApp or XenDesktop server. The content is then compressed and delivered over the ICA protocol to Citrix Receiver on the user device. This method provides the highest rate of compatibility with various applications and media formats. Because video processing is compute-intensive, server-rendered multimedia delivery benefits from onboard hardware acceleration. For example, support for DirectX Video Acceleration (DXVA) reduces CPU load by performing H.264 decoding in separate hardware. Intel Quick Sync and NVIDIA NVENC technologies provide hardware-accelerated H.264 encoding.

Because most servers do not offer hardware acceleration for video compression, server scalability suffers if all video processing is done on the server CPU. To maintain high server scalability, many multimedia formats can be redirected to the user device for local rendering. Windows Media redirection offloads the server for a wide variety of media formats typically associated with Windows Media Player.

Flash redirection redirects Adobe Flash video content to a Flash player running locally on the user device. HTML5 video has become popular, and Citrix introduced a redirection technology for this type of content. You can also apply the general content redirection technologies Host-to-client redirection and Local App Access to multimedia content.

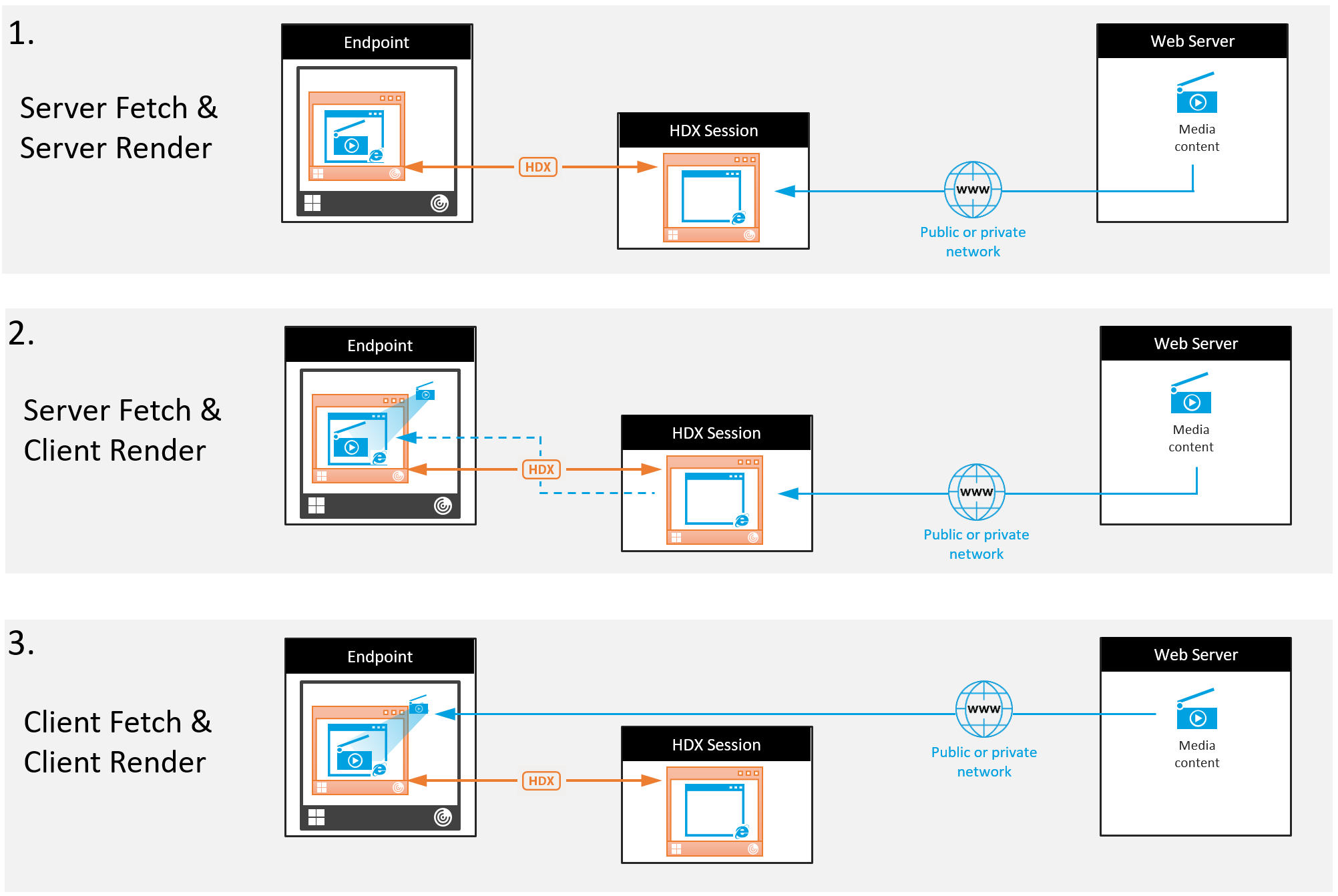

Putting these technologies together: if you do not configure redirection, HDX uses Server-Side Rendering. If you configure redirection, HDX uses either Server Fetch and Client Render or Client Fetch and Client Render. If those methods fail, HDX falls back to Server-Side Rendering as needed, subject to the Fallback Prevention Policy.
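The selection order described above can be pictured with a short sketch. This is an illustration only, not the actual HDX logic: the function name, its boolean parameters, the preference for client fetch over server fetch, and the `BLOCKED` outcome when fallback is prevented are all assumptions made for the example.

```python
from enum import Enum


class RenderMode(Enum):
    SERVER_RENDER = "server fetch, server render"
    SERVER_FETCH_CLIENT_RENDER = "server fetch, client render"
    CLIENT_FETCH_CLIENT_RENDER = "client fetch, client render"
    BLOCKED = "not played (fallback prevented)"  # assumed outcome


def choose_render_mode(redirection_configured, client_can_fetch,
                       client_can_decode, fallback_prevented=False):
    """Hypothetical decision order; all parameters are simplified assumptions."""
    if not redirection_configured:
        # No redirection configured: render on the server.
        return RenderMode.SERVER_RENDER
    if client_can_decode and client_can_fetch:
        return RenderMode.CLIENT_FETCH_CLIENT_RENDER
    if client_can_decode:
        return RenderMode.SERVER_FETCH_CLIENT_RENDER
    # Both redirection methods failed: fall back unless policy prevents it.
    if fallback_prevented:
        return RenderMode.BLOCKED
    return RenderMode.SERVER_RENDER


print(choose_render_mode(True, False, True).value)
```

In this sketch the fallback prevention policy is reduced to a single boolean; the real policy may offer more than two settings.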

Example scenarios

Scenario 1. (Server Fetch and Server Render):

- The server fetches the media file from its source, decodes it, and presents the content to an audio device or display device.

- The server extracts the presented image or sound from the display device or audio device, respectively.

- The server optionally compresses it, and then transmits it to the client.

This approach incurs a high CPU cost and a high bandwidth cost (if the extracted image or sound is not compressed efficiently), and it yields low server scalability.
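To give a sense of why the bandwidth cost is high when the extracted image is not compressed, the following sketch computes the raw bitrate of uncompressed video. The resolution, color depth, and frame rate are assumed example values, not figures from this article.

```python
def raw_video_bitrate_mbps(width, height, bits_per_pixel, frames_per_second):
    """Uncompressed video bitrate in megabits per second (1 Mbit = 10**6 bits)."""
    return width * height * bits_per_pixel * frames_per_second / 1_000_000


# Assumed example values: 1920x1080, 24 bits per pixel, 30 frames per second.
print(raw_video_bitrate_mbps(1920, 1080, 24, 30))  # 1492.992
```

At roughly 1.5 Gbit/s for a single uncompressed 1080p stream under these assumptions, efficient compression before transmission is essential.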

The Thinwire and Audio virtual channels use this approach. Its advantage is that it reduces the hardware and software requirements for the clients. Because decoding happens on the server, it works for a wider variety of devices and formats.

Scenario 2. (Server Fetch and Client Render):

This approach relies on being able to intercept the media content before it is decoded and presented to the audio or display device. The compressed audio/video content is instead sent to the client, where it is decoded and presented locally. The advantage of this approach is that decoding and presentation are offloaded to the client devices, saving CPU cycles on the server.

However, it introduces additional hardware and software requirements for the client. The client must be able to decode each format that it might receive.
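The client-side requirement can be illustrated with a minimal capability check. The function name and the decoder list below are hypothetical and do not correspond to any Citrix API; they only show the idea that redirection is viable when the client has a decoder for the format in question.

```python
def can_redirect_to_client(media_format, client_decoders):
    """Return True if the client reports a decoder for the given format.

    Hypothetical helper: format names are compared case-insensitively.
    """
    supported = {name.lower() for name in client_decoders}
    return media_format.lower() in supported


# Hypothetical decoder list reported by a client device.
client_decoders = ["H.264", "AAC", "WMV"]

print(can_redirect_to_client("h.264", client_decoders))  # True
print(can_redirect_to_client("VP9", client_decoders))    # False
```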

Scenario 3. (Client Fetch and Client Render):

This approach relies on being able to intercept the URL of the media content before it is fetched from the source. The URL is sent to the client, where the media content is fetched, decoded, and presented locally. This approach is conceptually simple. Its advantage is that it saves both CPU cycles on the server and bandwidth, because only control commands are sent from the server. However, the media content is not always accessible to the clients.

Framework and platform

Desktop operating systems (Windows, Mac OS X, and Linux) provide multimedia frameworks that enable faster, easier development of multimedia applications. This table lists some of the more popular multimedia frameworks. Each framework divides media processing into several stages and uses a pipeline-based architecture.

| Framework | Platform |
|---|---|
| DirectShow | Windows (98 and later) |
| Media Foundation | Windows (Vista and later) |
| GStreamer | Linux |
| QuickTime | Mac OS X |
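A pipeline-based architecture of the kind these frameworks use can be sketched with chained generators. This is a conceptual illustration only: the stage names and the string transformations are stand-ins and do not reflect the API of DirectShow, Media Foundation, GStreamer, or QuickTime.

```python
def source(chunks):
    """Stage 1: yield raw chunks from a media source (here, an in-memory list)."""
    for chunk in chunks:
        yield chunk


def decode(stream):
    """Stage 2: stand-in decoder; upper-casing represents decoding a chunk."""
    for chunk in stream:
        yield chunk.upper()


def render(stream):
    """Stage 3: stand-in renderer; collects frames instead of displaying them."""
    return [f"frame:{chunk}" for chunk in stream]


# Each stage consumes the output of the previous one.
frames = render(decode(source(["a", "b", "c"])))
print(frames)  # ['frame:A', 'frame:B', 'frame:C']
```

Because each stage only depends on the output of the one before it, a stage boundary is a natural place to intercept content, which is the property the redirection scenarios rely on.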

Double hop support with media redirection technologies

| Media redirection | Support |
|---|---|
| HDX Flash redirection | No |
| Windows Media redirection | Yes |
| HTML5 video redirection | Yes |
| Audio redirection | No |
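For scripted checks, the support table above can be encoded as a simple lookup. The dictionary and helper name are illustrative assumptions; the values are taken directly from the table.

```python
# Double hop support per redirection technology, copied from the table above.
DOUBLE_HOP_SUPPORT = {
    "HDX Flash redirection": False,
    "Windows Media redirection": True,
    "HTML5 video redirection": True,
    "Audio redirection": False,
}


def supported_in_double_hop():
    """Return the redirection technologies marked as supported, sorted by name."""
    return sorted(name for name, ok in DOUBLE_HOP_SUPPORT.items() if ok)


print(supported_in_double_hop())
# ['HTML5 video redirection', 'Windows Media redirection']
```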

