Local Host Cache

To ensure that the XenApp and XenDesktop Site database is always available, Citrix recommends starting with a fault-tolerant SQL Server deployment, by following high availability best practices from Microsoft. (The Databases section in the System requirements article lists the SQL Server high availability features supported in XenApp and XenDesktop.) However, network issues and interruptions may result in users not being able to connect to their applications or desktops.

The Local Host Cache (LHC) feature allows connection brokering operations in a XenApp or XenDesktop Site to continue when an outage occurs. An outage occurs when the connection between a Delivery Controller™ and the Site database fails. Local Host Cache engages when the Site database is inaccessible for 90 seconds.

Local Host Cache is the most comprehensive high availability feature in XenApp and XenDesktop. It is a more powerful alternative to the connection leasing feature that was introduced in XenApp 7.6.

Although this Local Host Cache implementation shares the name of the Local Host Cache feature in XenApp 6.x and earlier XenApp releases, there are significant improvements. This implementation is more robust and immune to corruption. Maintenance requirements are minimized, such as eliminating the need for periodic dsmaint commands. This Local Host Cache is an entirely different implementation technically; read on to learn how it works.

Note:

Although connection leasing is supported in version 7.15 LTSR, it will be removed in the following release.

Data content

Local Host Cache includes the following information, which is a subset of the information in the main database:

- Identities of users and groups who are specifically assigned rights to resources published from the Site.

- Identities of users who are currently using, or who have recently used, published resources from the Site.

- Identities of VDA machines (including Remote PC Access machines) configured in the Site.

- Identities (names and IP addresses) of client Citrix Receiver™ machines being actively used to connect to published resources.

It also contains information for currently active connections that were established while the main database was unavailable:

- Results of any client machine endpoint analysis performed by Citrix Receiver.

- Identities of infrastructure machines (such as NetScaler Gateway and StoreFront™ servers) involved with the Site.

- Dates and times and types of recent activity by users.

How it works

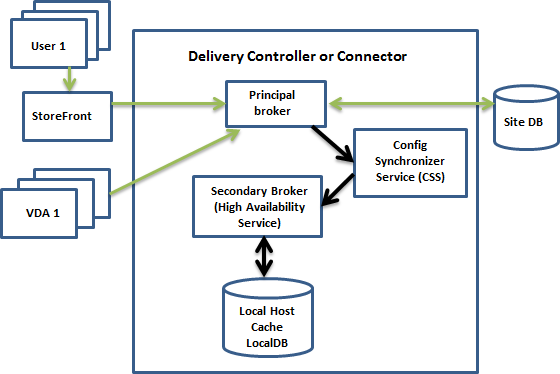

The following graphic illustrates the Local Host Cache components and communication paths during normal operations.

During normal operations:

- The principal broker (Citrix Broker Service) on a Controller accepts connection requests from StoreFront, and communicates with the Site database to connect users with VDAs that are registered with the Controller.

- A check is made every two minutes to determine whether changes have been made to the principal broker’s configuration. Those changes could have been initiated by PowerShell/Studio actions (such as changing a Delivery Group property) or system actions (such as machine assignments).

- If a change has been made since the last check, the principal broker uses the Citrix Config Synchronizer Service (CSS) to synchronize (copy) information to a secondary broker (Citrix High Availability Service) on the Controller. All broker configuration data is copied, not just items that have changed since the previous check. The secondary broker imports the data into a Microsoft SQL Server Express LocalDB database on the Controller. The CSS ensures that the information in the secondary broker’s LocalDB database matches the information in the Site database. The LocalDB database is re-created each time synchronization occurs.

- If no changes have occurred since the last check, no data is copied.
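The periodic check described above can be modeled in a few lines. This is only an illustrative sketch, not the Config Synchronizer Service itself: the `SecondaryBroker` class, the `sync_check` function, and the integer version counter used to detect changes are all hypothetical names introduced here. It shows the two behaviors the text calls out: nothing is copied when nothing changed, and a change causes the whole configuration to be copied and the local database to be re-created.

```python
from dataclasses import dataclass, field


@dataclass
class SecondaryBroker:
    """Toy stand-in for the High Availability Service and its LocalDB."""
    local_db: dict = field(default_factory=dict)
    imports: int = 0

    def import_config(self, full_config: dict) -> None:
        # The LocalDB is re-created on every synchronization, so the old
        # contents are replaced wholesale rather than patched.
        self.local_db = dict(full_config)
        self.imports += 1


def sync_check(site_config: dict, site_version: int,
               last_synced_version: int, secondary: SecondaryBroker) -> int:
    """Run one periodic check; return the version now held by the secondary."""
    if site_version == last_synced_version:
        return last_synced_version          # no change: no data is copied
    secondary.import_config(site_config)    # full copy, not a delta
    return site_version


secondary = SecondaryBroker()
config = {"DeliveryGroup-A": {"enabled": True}}
synced = sync_check(config, 1, 0, secondary)        # change detected
synced = sync_check(config, 1, synced, secondary)   # no change, nothing copied
config["DeliveryGroup-B"] = {"enabled": False}
synced = sync_check(config, 2, synced, secondary)   # change detected
print(secondary.imports, sorted(secondary.local_db))
# prints: 2 ['DeliveryGroup-A', 'DeliveryGroup-B']
```

Three checks result in only two imports, because the second check found no change.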

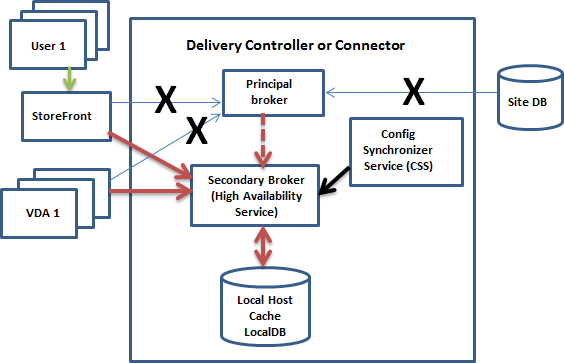

The following graphic illustrates the changes in communications paths if the principal broker loses contact with the Site database (an outage begins):

When an outage begins:

- The principal broker can no longer communicate with the Site database, and stops listening for StoreFront and VDA information (marked X in the graphic). The principal broker then instructs the secondary broker (High Availability Service) to start listening for and processing connection requests (marked with a red dashed line in the graphic).

- When the outage begins, the secondary broker has no current VDA registration data, but as soon as a VDA communicates with it, a re-registration process is triggered. During that process, the secondary broker also gets current session information about that VDA.

- While the secondary broker is handling connections, the principal broker continues to monitor the connection to the Site database. When the connection is restored, the principal broker instructs the secondary broker to stop listening for connection information, and the principal broker resumes brokering operations. The next time a VDA communicates with the principal broker, a re-registration process is triggered. The secondary broker removes any remaining VDA registrations from the previous outage, and resumes updating the LocalDB database with configuration changes received from the CSS.

In the unlikely event that an outage begins during a synchronization, the current import is discarded and the last known configuration is used.
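The hand-off between the principal and secondary brokers can be pictured as a simple function of how long the Site database has been unreachable. The sketch below is a hypothetical model, not product code: `replay` and `active_broker` are made-up names, the sampled timeline is invented, and treating exactly 90 seconds as the switch-over point is an assumption. Only the 90-second figure comes from this article.

```python
OUTAGE_THRESHOLD_SECONDS = 90  # from the article: LHC engages after 90 seconds


def active_broker(seconds_db_unreachable: float) -> str:
    """Return which broker handles connections for a given unreachable time."""
    if seconds_db_unreachable >= OUTAGE_THRESHOLD_SECONDS:
        return "secondary"
    return "principal"


def replay(samples):
    """Map (time_in_seconds, db_reachable) samples to the broker in charge."""
    unreachable_since = None
    result = []
    for t, reachable in samples:
        if reachable:
            unreachable_since = None      # connection restored: reset the clock
        elif unreachable_since is None:
            unreachable_since = t         # first failed check of this outage
        elapsed = 0 if unreachable_since is None else t - unreachable_since
        result.append((t, active_broker(elapsed)))
    return result


timeline = replay([(0, True), (30, False), (90, False), (120, False), (150, True)])
for t, broker in timeline:
    print(t, broker)
```

In this timeline the database becomes unreachable at 30 seconds, so the secondary broker takes over at the 120-second sample and the principal broker resumes at 150 seconds, when the connection is restored.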

The event log provides information about synchronizations and outages. See the “Monitor” section below for details.

You can also intentionally trigger an outage; see the “Force an outage” section below for details about why and how to do this.

Sites with multiple Controllers

Among its other tasks, the CSS routinely provides the secondary broker with information about all Controllers in the zone. (If your deployment does not contain multiple zones, this action affects all Controllers in the Site.) Having that information, each secondary broker knows about all peer secondary brokers.

The secondary brokers communicate with each other on a separate channel. They use an alphabetical list of FQDN names of the machines they’re running on to determine (elect) which secondary broker will be in charge of brokering operations in the zone if an outage occurs. During the outage, all VDAs re-register with the elected secondary broker. The non-elected secondary brokers in the zone will actively reject incoming connection and VDA registration requests.

If an elected secondary broker fails during an outage, another secondary broker is elected to take over, and VDAs will re-register with the newly-elected secondary broker.
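A minimal sketch of the election idea follows. It rests on two assumptions the text does not state: that the first name in the alphabetically sorted FQDN list wins, and that the comparison ignores case. The `elect` function and the `example.local` host names are hypothetical.

```python
def elect(fqdns, failed=()):
    """Pick the broker in charge from the secondary brokers still running."""
    failed_lower = {name.lower() for name in failed}
    candidates = sorted(
        (name for name in fqdns if name.lower() not in failed_lower),
        key=str.lower,  # assumption: alphabetical order ignores case
    )
    if not candidates:
        raise ValueError("no secondary broker available in the zone")
    return candidates[0]  # assumption: first name in the sorted list wins


zone = ["ddc03.example.local", "DDC01.example.local", "ddc02.example.local"]
first = elect(zone)                    # initial election when the outage begins
second = elect(zone, failed=[first])   # re-election after the elected broker fails
print(first, second)
# prints: DDC01.example.local ddc02.example.local
```

Because every secondary broker sorts the same list, each one can reach the same result independently, which is why the non-elected brokers know to reject incoming requests.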

During an outage, if a Controller is restarted:

- If that Controller is not the elected broker, the restart has no impact.

- If that Controller is the elected broker, a different Controller is elected, causing VDAs to re-register. After the restarted Controller powers on, it automatically takes over brokering, which causes VDAs to re-register again. In this scenario, performance may be affected during the re-registrations.

If you power off a Controller during normal operations and then power it on during an outage, Local Host Cache cannot be used on that Controller if it is elected as the broker.

The event log provides information about elections. See the “Monitor” section below.

Design considerations and requirements

Local Host Cache is supported for server-hosted applications and desktops, and static (assigned) desktops; it is not supported for pooled VDI desktops (created by MCS or PVS).

There is no time limit imposed for operating in outage mode. However, restore the site to normal operation as quickly as possible.

What is unavailable or changes during an outage:

- You cannot use Studio or run PowerShell cmdlets.

- Hypervisor credentials cannot be obtained from the Host Service. All machines are in the unknown power state, and no power operations can be issued. However, VMs on the host that are powered-on can be used for connection requests.

- An assigned machine can be used only if the assignment occurred during normal operations. New assignments cannot be made during an outage.

- Automatic enrollment and configuration of Remote PC Access machines is not possible. However, machines that were enrolled and configured during normal operation are usable.

- Server-hosted applications and desktop users may use more sessions than their configured session limits, if the resources are in different zones.

- Users can launch applications and desktops only from registered VDAs in the zone containing the currently active/elected (secondary) broker. Launches across zones (from a broker in one zone to a VDA in a different zone) are not supported during an outage.

By default, power-managed desktop VDAs in pooled Delivery Groups that have the ShutdownDesktopsAfterUse property enabled are placed into maintenance mode when an outage occurs. You can change this default, to allow those desktops to be used during an outage. However, you cannot rely on the power management during the outage. (Power management resumes after normal operations resume.) Also, those desktops might contain data from the previous user, because they have not been restarted.

To override the default behavior and allow those desktops to be used during an outage, you must enable machine reuse both site-wide and for each affected Delivery Group.

For the site, run the following PowerShell cmdlet:

Set-BrokerSite -ReuseMachinesWithoutShutdownInOutageAllowed $true

For each affected Delivery Group, run the following PowerShell cmdlet:

Set-BrokerDesktopGroup -Name "<name>" -ReuseMachinesWithoutShutdownInOutage $true

Enabling this feature in the Site and the Delivery Groups does not affect how the configured ShutdownDesktopsAfterUse property works during normal operations.

RAM size:

The LocalDB service can use approximately 1.2 GB of RAM (up to 1 GB for the database cache, plus 200 MB for running SQL Server Express LocalDB). The High Availability Service can use up to 1 GB of RAM if an outage lasts for an extended interval with many logons occurring (for example, 12 hours with 10K users). These memory requirements are in addition to the normal RAM requirements for the Controller, so you might need to increase the total amount of RAM capacity.

Note that if you use a SQL Server Express installation for the Site database, the server will have two sqlservr.exe processes.
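The RAM figures above can be added up as a rough sizing aid. The following sketch only restates the arithmetic from the text. The 8 GB baseline for the Controller is a hypothetical value chosen for illustration, and 1 GB is treated as 1024 MB.

```python
LOCALDB_CACHE_MB = 1024     # "up to 1 GB for the database cache"
LOCALDB_ENGINE_MB = 200     # "plus 200 MB for running SQL Server Express LocalDB"
HA_SERVICE_PEAK_MB = 1024   # "up to 1 GB" in a long outage with many logons


def extra_ram_mb() -> int:
    """Worst-case RAM that Local Host Cache adds on top of the Controller baseline."""
    return LOCALDB_CACHE_MB + LOCALDB_ENGINE_MB + HA_SERVICE_PEAK_MB


def total_ram_gb(baseline_controller_gb: float) -> float:
    """Baseline Controller RAM plus the Local Host Cache overhead, in GB."""
    return round(baseline_controller_gb + extra_ram_mb() / 1024, 2)


print(extra_ram_mb())     # prints: 2248
print(total_ram_gb(8))    # prints: 10.2  (8 GB is a hypothetical baseline)
```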

CPU core and socket configuration:

A Controller’s CPU configuration, particularly the number of cores available to the SQL Server Express LocalDB, directly affects Local Host Cache performance, even more so than memory allocation. This CPU overhead is observed only during the outage period when the database is unreachable and the High Availability service is active.

While LocalDB can use multiple cores (up to 4), it’s limited to only a single socket. Adding more sockets will not improve the performance (for example, having 4 sockets with 1 core each). Instead, Citrix recommends using multiple sockets with multiple cores. In Citrix testing, a 2x3 (2 sockets, 3 cores) configuration provided better performance than 4x1 and 6x1 configurations.

Storage:

As users access resources during an outage, the LocalDB grows. For example, during a logon/logoff test running at 10 logons per second, the database grew by one MB every 2-3 minutes. When normal operation resumes, the local database is recreated and the space is returned. However, the broker must have sufficient space on the drive where the LocalDB is installed to allow for the database growth during an outage. Local Host Cache also incurs additional I/O during an outage: approximately 3 MB of writes per second, with several hundred thousand reads.
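To get a feel for the disk space involved, the growth rate quoted above can be extrapolated over the length of an outage. This is a back-of-the-envelope sketch that assumes growth stays linear at the tested rate (1 MB every 2 to 3 minutes at 10 logons per second), which the text does not guarantee. The 12-hour duration is just an example, and `growth_mb` is a hypothetical helper.

```python
def growth_mb(outage_hours: float, minutes_per_mb: float) -> float:
    """Estimated LocalDB growth, assuming a constant growth rate."""
    return outage_hours * 60 / minutes_per_mb


low = growth_mb(12, 3)    # slower end of the tested rate: 1 MB every 3 minutes
high = growth_mb(12, 2)   # faster end of the tested rate: 1 MB every 2 minutes
print(f"12-hour outage: roughly {low:.0f} to {high:.0f} MB of growth")
# prints: 12-hour outage: roughly 240 to 360 MB of growth
```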

Performance:

During an outage, one broker handles all the connections, so in Sites (or zones) that load balance among multiple Controllers during normal operations, the elected broker might need to handle many more requests than normal during an outage. Therefore, CPU demands will be higher. Every broker in the Site (zone) must be able to handle the additional load imposed by LocalDB and all of the affected VDAs, because the broker elected during an outage could change.

VDI limits:

- In a single-zone VDI deployment, up to 10,000 VDAs can be handled effectively during an outage.

- In a multi-zone VDI deployment, up to 10,000 VDAs in each zone can be handled effectively during an outage, to a maximum of 40,000 VDAs in the site. For example, each of the following sites can be handled effectively during an outage:

  - A site with four zones, each containing 10,000 VDAs.

  - A site with seven zones, one containing 10,000 VDAs, and six containing 5,000 VDAs each.
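The two limits above (10,000 VDAs per zone and 40,000 VDAs per site) can be checked against a planned layout with a short helper. This is an illustrative sketch: `within_outage_limits` is a hypothetical function, and the third layout is an invented counter-example.

```python
MAX_VDAS_PER_ZONE = 10_000
MAX_VDAS_PER_SITE = 40_000


def within_outage_limits(vdas_per_zone) -> bool:
    """True if every zone and the site as a whole stay within the stated limits."""
    return (all(count <= MAX_VDAS_PER_ZONE for count in vdas_per_zone)
            and sum(vdas_per_zone) <= MAX_VDAS_PER_SITE)


four_zones = [10_000] * 4               # first example from the text
seven_zones = [10_000] + [5_000] * 6    # second example from the text
five_full_zones = [10_000] * 5          # hypothetical: 50,000 VDAs in the site

print(within_outage_limits(four_zones))        # prints: True
print(within_outage_limits(seven_zones))       # prints: True
print(within_outage_limits(five_full_zones))   # prints: False
```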

During an outage, load management within the Site may be affected. Load evaluators (and especially, session count rules) may be exceeded.

During the time it takes all VDAs to re-register with a broker, that broker might not have complete information about current sessions. So, a user connection request during that interval could result in a new session being launched, even though reconnection to an existing session was possible. This interval (while the “new” broker acquires session information from all VDAs during re-registration) is unavoidable. Note that sessions that are connected when an outage starts are not impacted during the transition interval, but new sessions and session reconnections could be.

This interval occurs whenever VDAs must re-register with a different broker:

- An outage starts: When migrating from a principal broker to a secondary broker.

- Broker failure during an outage: When migrating from a secondary broker that failed to a newly-elected secondary broker.

- Recovery from an outage: When normal operations resume, and the principal broker resumes control.

You can decrease the interval by lowering the Citrix Broker Protocol’s HeartbeatPeriodMs registry value (default = 600000 ms, which is 10 minutes). This heartbeat value is double the interval the VDA uses for pings, so the default value results in a ping every 5 minutes.

For example, the following command changes the heartbeat to five minutes (300000 milliseconds), which results in a ping every 2.5 minutes:

New-ItemProperty -Path HKLM:\SOFTWARE\Citrix\DesktopServer -Name HeartbeatPeriodMs -PropertyType DWORD -Value 300000

The interval cannot be eliminated entirely, no matter how quickly the VDAs register.
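The relationship between the HeartbeatPeriodMs value and the VDA ping interval is a simple halving, as the small calculation below shows. The `ping_interval_minutes` function name is hypothetical. The two inputs are the default and the example value from the text.

```python
def ping_interval_minutes(heartbeat_period_ms: int) -> float:
    """The heartbeat value is double the VDA ping interval."""
    return heartbeat_period_ms / 2 / 60_000


print(ping_interval_minutes(600_000))  # prints: 5.0  (default heartbeat)
print(ping_interval_minutes(300_000))  # prints: 2.5  (example value above)
```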

The time it takes to synchronize between brokers increases with the number of objects (such as VDAs, applications, groups). For example, synchronizing 5000 VDAs might take ten minutes or more to complete. See the “Monitor” section below for information about synchronization entries in the event log.

Manage Local Host Cache

For Local Host Cache to work correctly, the PowerShell execution policy on each Controller must be set to RemoteSigned, Unrestricted, or Bypass.

SQL Server Express LocalDB

The Microsoft SQL Server Express LocalDB that Local Host Cache uses is installed automatically when you install a Controller or upgrade a Controller from a version earlier than 7.9. There is no administrator maintenance needed for the LocalDB. Only the secondary broker communicates with this database; you cannot use PowerShell cmdlets to change anything about this database. The LocalDB cannot be shared across Controllers.

The SQL Server Express LocalDB database software is installed regardless of whether Local Host Cache is enabled.

To prevent its installation, install or upgrade the Controller using the XenDesktopServerSetup.exe command, and include the /exclude "Local Host Cache Storage (LocalDB)" option. However, keep in mind that the Local Host Cache feature will not work without the database, and you cannot use a different database with the secondary broker.

Installation of this LocalDB database has no effect on whether or not you install SQL Server Express for use as the site database.

Default settings after XenApp or XenDesktop® installation and upgrade

During a new installation of XenApp® and XenDesktop, Local Host Cache is enabled by default. (Connection leasing is disabled by default.)

After an upgrade, the Local Host Cache setting is unchanged. For example, if Local Host Cache was enabled in the earlier version, it remains enabled in the upgraded version. If Local Host Cache was disabled (or not supported) in the earlier version, it remains disabled in the upgraded version.

Enable and disable Local Host Cache

To enable Local Host Cache, enter:

Set-BrokerSite -LocalHostCacheEnabled $true -ConnectionLeasingEnabled $false

This cmdlet also disables the connection leasing feature. Do not enable both Local Host Cache and connection leasing.

To determine whether Local Host Cache is enabled, enter:

Get-BrokerSite

Check that the LocalHostCacheEnabled property is True, and that the ConnectionLeasingEnabled property is False.

To disable Local Host Cache (and enable connection leasing), enter:

Set-BrokerSite -LocalHostCacheEnabled $false -ConnectionLeasingEnabled $true

Verify that Local Host Cache is working

To verify that Local Host Cache is set up and working correctly:

- Ensure that synchronization imports complete successfully. Check the event logs.

- Ensure that the SQL Server Express LocalDB database was created on each Delivery Controller. This confirms that the High Availability Service can take over, if needed.

  - On the Delivery Controller server, browse to C:\Windows\ServiceProfiles\NetworkService.

  - Verify that HaDatabaseName.mdf and HaDatabaseName_log.ldf are created.

- Force an outage on the Delivery Controllers. After you’ve verified that Local Host Cache works, remember to place all of the Controllers back into normal mode. The return to normal mode can take approximately 15 minutes, which helps avoid VDA registration storms.
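The file check in the steps above can be scripted if you need to repeat it on several Controllers. The sketch below is one hedged way to do it. The folder and file names are taken from the steps above, while the `missing_localdb_files` helper is hypothetical. It only reports whether the two files exist and says nothing about whether the database contents are current.

```python
from pathlib import Path

# File names as given in the verification steps above.
EXPECTED_FILES = ("HaDatabaseName.mdf", "HaDatabaseName_log.ldf")


def missing_localdb_files(folder) -> list:
    """Return the expected LocalDB files that are not present in the folder."""
    base = Path(folder)
    return [name for name in EXPECTED_FILES if not (base / name).is_file()]


missing = missing_localdb_files(r"C:\Windows\ServiceProfiles\NetworkService")
if missing:
    print("Missing LocalDB files:", ", ".join(missing))
else:
    print("Both LocalDB files are present.")
```

Run it on each Delivery Controller with an account that has read access to the NetworkService profile folder.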

Force an outage

You might want to deliberately force a database outage in the following situations:

- If your network is going up and down repeatedly. Forcing an outage until the network issues resolve prevents continuous transition between normal and outage modes.

- To test a disaster recovery plan.

- While replacing or servicing the site database server.

To force an outage, edit the registry of each server containing a Delivery Controller.

- In HKLM\Software\Citrix\DesktopServer\LHC, set OutageModeForced to 1. This instructs the broker to enter outage mode, regardless of the state of the database. (Setting the value to 0 takes the server out of outage mode.)

- In a Citrix Cloud™ scenario, the connector enters outage mode, regardless of the state of the connection to the control plane or primary zone.

Monitor

Event logs indicate when synchronizations and outages occur.

Config Synchronizer Service:

During normal operations, the following events can occur when the CSS copies and exports the broker configuration and imports it to the LocalDB using the High Availability Service (secondary broker).

- 503: A change was found in the principal broker configuration, and an import is starting.

- 504: The broker configuration was copied, exported, and then imported successfully to the LocalDB.

- 505: An import to the LocalDB failed; see below for more information.

- 510: No Configuration Service configuration data received from primary Configuration Service.

- 517: There was a problem communicating with the primary Broker.

- 518: Config Sync script aborted because the secondary Broker (High Availability Service) is not running.

High Availability Service:

- 3502: An outage occurred and the secondary broker (High Availability Service) is performing brokering operations.

- 3503: An outage has been resolved and normal operations have resumed.

- 3504: Indicates which secondary broker is elected, plus other brokers involved in the election.
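When triaging event log entries, it can help to keep the IDs above in a small lookup table. The sketch below is an illustrative helper, not a Citrix tool. The event meanings are paraphrased from the lists above, while the choice of which IDs to flag for attention (`PROBLEM_IDS`) is an assumption made for this example.

```python
# Event IDs and meanings as listed above (paraphrased).
EVENTS = {
    503: ("Config Synchronizer Service", "configuration change found, import starting"),
    504: ("Config Synchronizer Service", "configuration imported to the LocalDB"),
    505: ("Config Synchronizer Service", "import to the LocalDB failed"),
    510: ("Config Synchronizer Service", "no configuration data received from the primary Configuration Service"),
    517: ("Config Synchronizer Service", "problem communicating with the primary Broker"),
    518: ("Config Synchronizer Service", "script aborted, secondary Broker not running"),
    3502: ("High Availability Service", "outage started, secondary broker is brokering"),
    3503: ("High Availability Service", "outage resolved, normal operations resumed"),
    3504: ("High Availability Service", "election result"),
}

# Assumption for this sketch: these are the IDs worth alerting on.
PROBLEM_IDS = {505, 510, 517, 518, 3502}


def describe(event_id: int) -> str:
    """Return a one-line summary for an event ID, flagging the problem IDs."""
    service, meaning = EVENTS.get(
        event_id, ("unknown service", "not listed in this article"))
    flag = "ATTENTION" if event_id in PROBLEM_IDS else "info"
    return f"[{flag}] {event_id} ({service}): {meaning}"


for event_id in (504, 505, 3502, 9999):
    print(describe(event_id))
```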

Troubleshoot

Several troubleshooting tools are available when a synchronization import to the LocalDB fails and a 505 event is posted.

CDF tracing: Contains options for the ConfigSyncServer and BrokerLHC modules. Those options, along with other broker modules, will likely identify the problem.

Report: If a synchronization import fails, you can generate a report. This report stops at the object causing the error. This report feature affects synchronization speed, so Citrix recommends disabling it when not in use.

To enable and produce a CSS trace report, enter the following command:

New-ItemProperty -Path HKLM:\SOFTWARE\Citrix\DesktopServer\LHC -Name EnableCssTraceMode -PropertyType DWORD -Value 1

The HTML report is posted at C:\Windows\ServiceProfiles\NetworkService\AppData\Local\Temp\CitrixBrokerConfigSyncReport.html.

After the report is generated, enter the following command to disable the reporting feature:

Set-ItemProperty -Path HKLM:\SOFTWARE\Citrix\DesktopServer\LHC -Name EnableCssTraceMode -Value 0

Export the broker configuration: Provides the exact configuration for debugging purposes.

Export-BrokerConfiguration | Out-File file-pathname

For example, Export-BrokerConfiguration | Out-File C:\BrokerConfig.xml.
